The Real Tech Revolution in the Legal Profession (WORDS x NUMBERS)

- By: JPG

- Date: 23-Nov-17

Much has been said about Artificial Intelligence and its impact on the legal profession. Some futurologists even claim that the profession will one day be entirely replaced by robots and/or computers, a prediction that has caused a “frisson” and a great deal of insecurity among professionals.

I do not agree with this categorical statement, but I do agree that these new technologies are now imposing a revolution. In my humble opinion, this technological wave will indeed revolutionize not only the legal profession but all the so-called human professions, that is, every profession that still relies heavily on interaction between human beings in order to be exercised.

First of all, we must break down the term “Artificial Intelligence”, which is nothing more than a series of technological advances that now make it possible to apply several theories and statistical models, some of which have existed for many years.

Let us start with Moore’s law.

According to Moore’s prediction (first made in 1965 and revised in 1975), the number of transistors on a chip would double at regular intervals (every year in the original forecast, every two years after the revision) while each transistor kept shrinking. This trend has held over all those years and is expected to reach a physical limit of size (measured in nanometers) around 2025. This exponential increase in processing capacity has made possible what was once unthinkable, especially when it comes to words, images and video, which require far more “muscle” than processing numbers.
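Why an exponential trend matters so much comes down to simple arithmetic: a quantity that doubles every T years grows by a factor of 2^(years/T). The sketch below is purely illustrative; the two doubling periods and the 20-year horizon are assumptions chosen for the example, not measured chip data.

```python
def growth_factor(years: float, doubling_period_years: float) -> float:
    """Factor by which a quantity grows if it doubles every `doubling_period_years`."""
    return 2 ** (years / doubling_period_years)

# Illustrative horizon of 20 years, comparing a 1-year and a 2-year doubling period.
for period in (1, 2):
    print(f"doubling every {period} year(s): x{growth_factor(20, period):,.0f} after 20 years")
```

Halving the doubling period squares the overall growth factor (x1,024 becomes x1,048,576 over the same 20 years), which is why the difference between the 1965 and 1975 versions of the prediction is so large.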

Another very important factor, closely linked to the previous one, is how much time the industry in general has devoted to developing tools for processing numbers compared with other kinds of information.

Database analysis tools began to be developed in the 60s; in 1970 IBM introduced the relational model, followed in the mid-70s by SQL (Structured Query Language), and in 1979 Relational Software (later Oracle Corporation) released Oracle V2. Together, these revolutionized the way numerical data were handled.
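What made this a revolution for numbers is that a single declarative query can aggregate numeric data without custom programming. A minimal sketch using Python's built-in `sqlite3` module (SQLite is used here only for convenience; the `fees` table and its figures are hypothetical):

```python
import sqlite3

# In-memory database with a hypothetical table of legal fees (illustrative data).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE fees (client TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO fees (client, amount) VALUES (?, ?)",
    [("Acme", 1200.0), ("Acme", 800.0), ("Beta", 500.0)],
)

# One SQL statement totals the amounts per client.
rows = conn.execute(
    "SELECT client, SUM(amount) FROM fees GROUP BY client ORDER BY client"
).fetchall()
print(rows)  # [('Acme', 2000.0), ('Beta', 500.0)]
conn.close()
```

Nothing comparable existed for free text at the time: there is no equally simple query that asks a pile of documents "which of these are about a search warrant?".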

It was only at the end of the 90s that Google revolutionized the way we find words on the Internet (Google Search was launched in 1998 and quickly became a great success), and this gap of nearly 40 years partly explains why tools that deal with words are only now beginning to appear.

Still on the subject of hardware development, we must mention the evolution of communication speed. To give three examples: Internet2, with a theoretical speed of 10 Gbps (enough to transfer a 4.7 GB DVD in under 4 seconds); the IPv6 addressing protocol, which offers about 79 octillion times as many addresses as IPv4; and 5G technology, whose rollout in Brazil is estimated to occur in 2018, with 10 times the speed of 4G.
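Both figures follow from simple arithmetic: a transfer time is the size in bits divided by the link rate, and the IPv6/IPv4 ratio follows from 128-bit versus 32-bit addresses. The check below assumes a 4.7 GB (decimal gigabytes) DVD and an ideal, overhead-free 10 Gbps link, so real transfers would be slower.

```python
DVD_BYTES = 4.7e9          # single-layer DVD: 4.7 GB (decimal gigabytes)
LINK_BITS_PER_SEC = 10e9   # assumed ideal 10 Gbps link, no protocol overhead

seconds = DVD_BYTES * 8 / LINK_BITS_PER_SEC  # convert bytes to bits, divide by rate
print(f"DVD transfer: {seconds:.2f} s")  # DVD transfer: 3.76 s

ipv4_addresses = 2 ** 32    # IPv4 addresses are 32 bits long
ipv6_addresses = 2 ** 128   # IPv6 addresses are 128 bits long
ratio = ipv6_addresses // ipv4_addresses  # exactly 2**96
print(f"IPv6/IPv4 ratio: {ratio:.3e}")  # IPv6/IPv4 ratio: 7.923e+28
```

Since 2^96 ≈ 7.9 × 10^28, the ratio is about 79 octillion (short scale).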

According to IBM, 88% of the information that exists today and is available on the internet is still inaccessible to digital and statistical processing (the big leap that databases brought about in the 60s), because it consists of words, images and sounds. Also according to IBM, Watson can currently process (and interpret) 800 million documents in just one second!

All this technological progress has allowed research and development to focus on solving the difficulties we have had (so far) in the digital processing of so-called “natural human language”, going beyond the simple fast word search.

There are dozens of statistical algorithms (some of which have existed for years), such as decision trees, clustering, ensemble methods, regressions and Naive Bayes, which, combined with dictionaries of meanings and synonyms, have allowed computers to interpret words within a given context and, consequently, the meaning of a whole text.
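As an illustration of one of the algorithms named above, here is a minimal multinomial Naive Bayes text classifier in plain Python. It is a sketch, not how any particular legal product works: the two categories ("criminal", "contract") and the training sentences are hypothetical, and real systems train on far larger corpora.

```python
import math
from collections import Counter, defaultdict

def train(docs):
    """docs: list of (label, text). Returns word counts per label and document counts per label."""
    word_counts = defaultdict(Counter)
    label_counts = Counter()
    for label, text in docs:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    return word_counts, label_counts

def classify(text, word_counts, label_counts):
    """Return the label with the highest log-probability for `text`."""
    vocab = {w for counts in word_counts.values() for w in counts}
    total_docs = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        # log prior plus the sum of log likelihoods, with add-one (Laplace) smoothing
        score = math.log(label_counts[label] / total_docs)
        n_words = sum(word_counts[label].values())
        for w in text.lower().split():
            score += math.log((word_counts[label][w] + 1) / (n_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Hypothetical training examples, for illustration only.
training = [
    ("criminal", "search warrant seizure police arrest"),
    ("criminal", "arrest warrant defendant police"),
    ("contract", "contract clause payment breach"),
    ("contract", "payment terms contract signature"),
]
wc, lc = train(training)
print(classify("police obtained a warrant", wc, lc))  # criminal
print(classify("breach of payment clause", wc, lc))   # contract
```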

These algorithms (whichever ones are used) allow their answers to be corrected, so that, as results are displayed, the system improves and the accuracy of its answers increases. These corrections can come from human beings who mark results as right or wrong (“supervised machine learning”), or the computer can find patterns on its own through statistical analysis of unlabeled data (“unsupervised machine learning”).
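The distinction can be illustrated with two tiny learners applied to the same one-dimensional data. This is a hypothetical sketch: the numbers (think of them as document lengths in pages) and the function names are made up; a nearest-centroid classifier stands in for supervised learning and k-means clustering for unsupervised learning.

```python
def nearest_centroid_fit(points, labels):
    """Supervised: a human supplies a label for every point; learn one centroid per label."""
    groups = {}
    for p, lab in zip(points, labels):
        groups.setdefault(lab, []).append(p)
    return {lab: sum(ps) / len(ps) for lab, ps in groups.items()}

def kmeans_1d(points, k=2, iterations=10):
    """Unsupervised: no labels are given; the algorithm finds k groups by itself."""
    step = max(1, len(points) // k)
    centroids = sorted(points)[::step][:k]  # naive initialization from spread-out points
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # keep the old centroid if a cluster ends up empty
        centroids = [sum(c) / len(c) if c else centroids[i] for i, c in enumerate(clusters)]
    return centroids

points = [1, 2, 3, 20, 21, 22]
labels = ["short", "short", "short", "long", "long", "long"]
print(nearest_centroid_fit(points, labels))  # {'short': 2.0, 'long': 21.0}
print(kmeans_1d(points))                     # [2.0, 21.0]
```

Both find the same two groups here, but only the supervised version knows what to call them; a human correction (changing a label and refitting) is what steers it.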

The example I often use concerns how a machine (a set of hardware and software) can correctly tell, from context, which meaning a lawyer wants when searching for the Portuguese word “mandado”, which can mean either “warrant” or “ordered”. Take two electronically stored texts: one contains the sentence “the judge had ordered the carrier to look for his clothes at his house”, and the other “the judge issued a search and seizure warrant for the house”. In Portuguese, both sentences contain the word “mandado”, and the lawyer searches for judge, warrant and house. Only through algorithms that analyze the rest of the text can a “smart search engine” differentiate the two sentences and return the correct one.
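A very simplified way to make this concrete is a Lesk-style heuristic: compare the words surrounding “mandado” with a short list of words typically associated with each meaning. The sketch uses the two sentences in Portuguese; the sense word lists in `SENSES` are hypothetical and hand-made, whereas real systems learn such associations statistically from large corpora.

```python
# Hypothetical sense signatures: context words that typically accompany each meaning.
SENSES = {
    "warrant": {"expediu", "busca", "apreensão", "prisão", "cumprimento", "oficial"},
    "ordered": {"havia", "tinha", "tem", "buscar", "pegar", "fazer"},
}

def disambiguate(sentence, target="mandado"):
    """Pick the sense whose signature shares the most words with the sentence context."""
    words = sentence.lower().split()
    if target not in words:
        return None
    context = set(words) - {target}
    return max(SENSES, key=lambda sense: len(SENSES[sense] & context))

s1 = "o juiz havia mandado o carregador buscar suas roupas em sua casa"
s2 = "o juiz expediu mandado de busca e apreensão na casa"
print(disambiguate(s1))  # ordered
print(disambiguate(s2))  # warrant

# A "smart search" for the warrant sense keeps only the second text.
print([s for s in (s1, s2) if disambiguate(s) == "warrant"])
```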

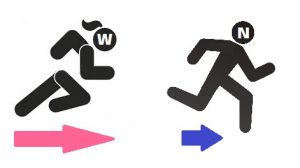

As I have already mentioned, in my humble opinion, the great revolution happening in law (and in all other human professions) is the ability to handle words digitally and statistically, that is, “words are coming closer to numbers”!
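To give a sense of what “words coming closer to numbers” means in practice, one of the simplest techniques turns each text into a vector of word counts and compares texts with cosine similarity. This is a minimal illustrative sketch with made-up sentences, not a description of any specific product; modern systems use far richer representations.

```python
import math
from collections import Counter

def to_vector(text):
    """Turn a text into numbers: a count for each word (bag of words)."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse count vectors (1.0 means identical direction)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

query = to_vector("search warrant house")
doc1 = to_vector("the judge issued a search warrant for the house")
doc2 = to_vector("the parties signed the lease for the house")
print(round(cosine(query, doc1), 3), round(cosine(query, doc2), 3))  # 0.522 0.154
```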

ILTACON 2017 – ADJUSTED EXPECTATIONS FOR TECHNOLOGICAL TRENDS IN THE LEGAL MARKET

- By: JPG

- Date: 02-Nov-17

During the week of August 13 to August 17, Las Vegas hosted the fortieth edition of ILTACON, where I had the honor of being the first Brazilian to participate as a speaker (after 20 years attending as a listener) and where the new collaboration platforms for the legal market were discussed.

The theme “Artificial Intelligence” is still the buzzword of the moment, but at this edition of ILTACON it was discussed with much more serenity, after the frisson of the previous edition. Not that the topic has lost its importance, but companies have realized that adopting this concept is not a panacea for all problems, much less the one solution that will solve all of them at the same time. Although IBM presented the immense capabilities of Watson in one of the opening sessions, the truth is that we are still fairly far from the capabilities of HAL (for those who remember the Kubrick film) or the boy David (from the film A.I.).

As I have written in previous articles, so-called Artificial Intelligence, or more specifically Cognitive Intelligence, is nothing more than the set of technological developments that have emerged in recent times and that, together or separately, have helped people with specific tasks that until recently only the human brain could perform.

We can list the following new techniques (some are not so new, but are being continuously improved):

- facial recognition;
- voice recognition;
- natural language recognition (Alexa, Siri and Google Voice Search, still embryonic);
- conversion of spoken language into text and vice versa;
- statistical algorithms for simulation and prediction (regression, decision trees, SVM, Naïve Bayes, KNN, random forest, etc.);
- and, more specifically for the legal market (which is what interests us), algorithms for the semantic interpretation of texts, which enable contextual analysis and the extraction of the subject a particular text refers to.
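A classic and much simpler relative of these subject-extraction techniques is TF-IDF weighting: a word scores high in a document if it is frequent there but rare across the collection. The sketch below is illustrative only; the three short “documents” are made up and the function name `tfidf_keywords` is hypothetical, not meant to describe how any specific product works.

```python
import math
from collections import Counter

def tfidf_keywords(docs, top_n=2):
    """Return the top_n highest TF-IDF terms for each document (ties broken alphabetically)."""
    tokenized = [d.lower().split() for d in docs]
    n_docs = len(tokenized)
    # document frequency: in how many documents each term appears
    df = Counter(term for words in tokenized for term in set(words))
    keywords = []
    for words in tokenized:
        tf = Counter(words)
        # term frequency in this document times inverse document frequency
        scores = {t: (c / len(words)) * math.log(n_docs / df[t]) for t, c in tf.items()}
        ranked = sorted(scores, key=lambda t: (-scores[t], t))
        keywords.append(ranked[:top_n])
    return keywords

docs = [
    "the tenant must pay the rent and the rent is due monthly",
    "the employee must receive the salary and the salary includes overtime",
    "the buyer must pay the price and the price includes taxes",
]
print(tfidf_keywords(docs, top_n=1))  # [['rent'], ['salary'], ['price']]
```

Words shared by every document (“the”, “must”, “and”) get an IDF of zero, which is how this crude method separates the subject of each text from the common vocabulary.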

Of the huge amount of digital information that currently exists, about 88% is still inaccessible to interpretation and digital processing (according to an IBM estimate), because it is so-called unstructured information, that is, texts, videos and sounds.

The professions referred to as humanities or non-exact, such as Law and Medicine, deal with this kind of information, and the technology to process it has not developed as quickly as the technology for processing numeric information.

The first programs for handling so-called databases emerged in the mid-60s. They have developed enormously since then and have become much more user-friendly: in the past only DBAs were able to extract information from databases, whereas today, with tools such as Tableau or Qlik, almost any user can do so without much training.

Word-search tools, on the other hand, only appeared in the 90s (Google was launched in 1998), and the first search engines for the legal market emerged about a decade ago, but with no intelligence to recognize that a user searching for the word “letter” may also be looking for “correspondence”, “memorandum” or “memo”. For this modest intelligence to work, a programmer and a lawyer must work together to create libraries of specific synonyms and register them in the system, which makes such systems, however good, laboriously limited.
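The manual synonym library described above amounts to simple query expansion. This is a hypothetical minimal example: the `SYNONYMS` dictionary, the function names and the sample documents are illustrative. It also shows the limitation pointed out above, since every synonym has to be registered by hand.

```python
# Hypothetical synonym library, curated by hand by a lawyer and a programmer.
SYNONYMS = {
    "letter": {"correspondence", "memorandum", "memo"},
}

def expand_query(term):
    """Return the search term plus any registered synonyms (in both directions)."""
    term = term.lower()
    expanded = {term} | SYNONYMS.get(term, set())
    # reverse lookup: searching "memo" should also find "letter" and its other synonyms
    for head, syns in SYNONYMS.items():
        if term in syns:
            expanded |= {head} | syns
    return expanded

def search(documents, term):
    """Return the documents containing any word of the expanded query."""
    wanted = expand_query(term)
    return [d for d in documents if wanted & set(d.lower().split())]

docs = [
    "Memo to the board about the merger",
    "Correspondence with opposing counsel",
    "Invoice for March",
]
print(search(docs, "letter"))
# ['Memo to the board about the merger', 'Correspondence with opposing counsel']
```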

Because of this delay or inability of technology (the distinction does not matter) to solve these difficulties, the non-exact professions still depend heavily on human interpretation of texts, sounds and videos, which has kept them relatively protected from the technological “attack”. So far!

The real big revolution has already started. It is still taking its first steps, but it is inevitable; it is what I call “the approximation of words to numbers”. When new technologies can statistically process words the same way they process numbers, and/or when a computer can fully understand and interpret all the nuances of human language (at a cost affordable to everyone), everything will be different. Companies such as RAVN and KIRA (just two examples) have already developed contract-analysis products that incorporate these new technologies and make lawyers' work much more agile. There is still a long way to go, but the way some professions are practiced will surely be radically changed.